AI Notetakers Are Quietly Becoming the Biggest Insider Threat for Enterprises

The M&A team is on a virtual call with its legal advisors, discussing a critical asset acquisition. The discussion covers asset valuation, undisclosed clinical trial results, and term sheet sensitivities that have not yet been shared outside the room. Somewhere on that call, an AI meeting assistant silently joins, begins transcribing, and sends the conversation to a third-party large language model for summarization. No one in the room has authorized this. No one has even noticed the bot.

This isn’t a scenario from a cybersecurity thriller. It is happening in enterprise environments every single day.

Following World IP Day on April 26, we celebrate the power of ideas and the legal frameworks built to protect intellectual property. But as the tools we use become more intelligent, they also become harder to secure and govern. The question facing CISOs, legal leaders, and compliance officers today is not whether AI notetakers are useful, but what these tools actually do with the information they capture.

THE PRODUCTIVITY ILLUSION

Adoption of AI meeting assistants took off rapidly. Tools such as Otter, Fireflies, and Copilot-style assistants plug directly into Microsoft Teams and Zoom and promise to eliminate the friction of manual notetaking.

These seemingly miraculous plug-ins show up to every call, capture everything, miss nothing, and even automate follow-ups. It is the efficiency every distributed enterprise team has always wanted.

However, convenience and safety are not the same thing, and the race toward productivity has outpaced any meaningful appetite for scrutiny. Many of these solutions have opened a Pandora's box of legal and cybersecurity challenges.

A SILENT OBSERVER WITH PERSISTENT MEMORY

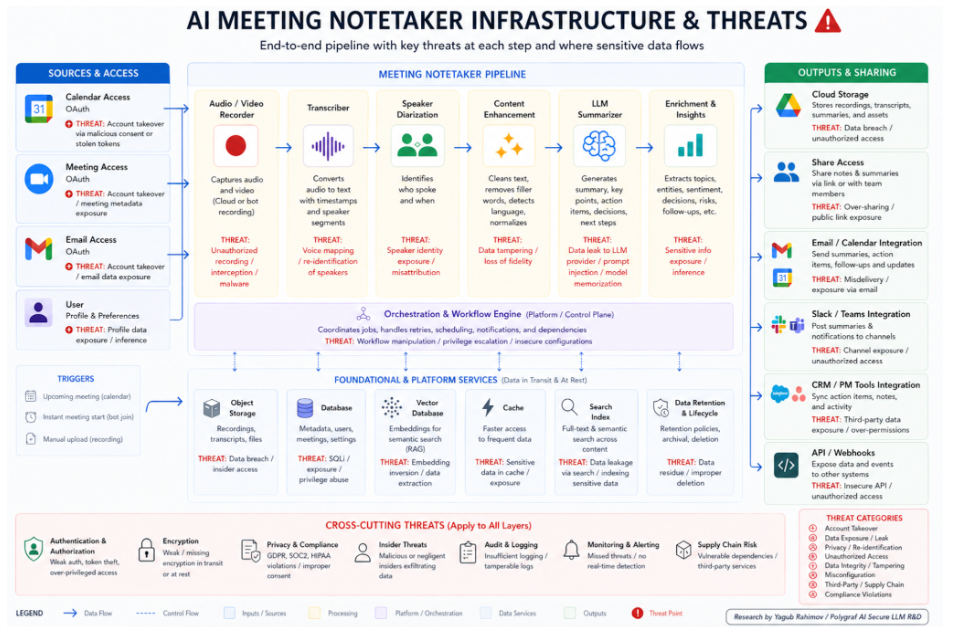

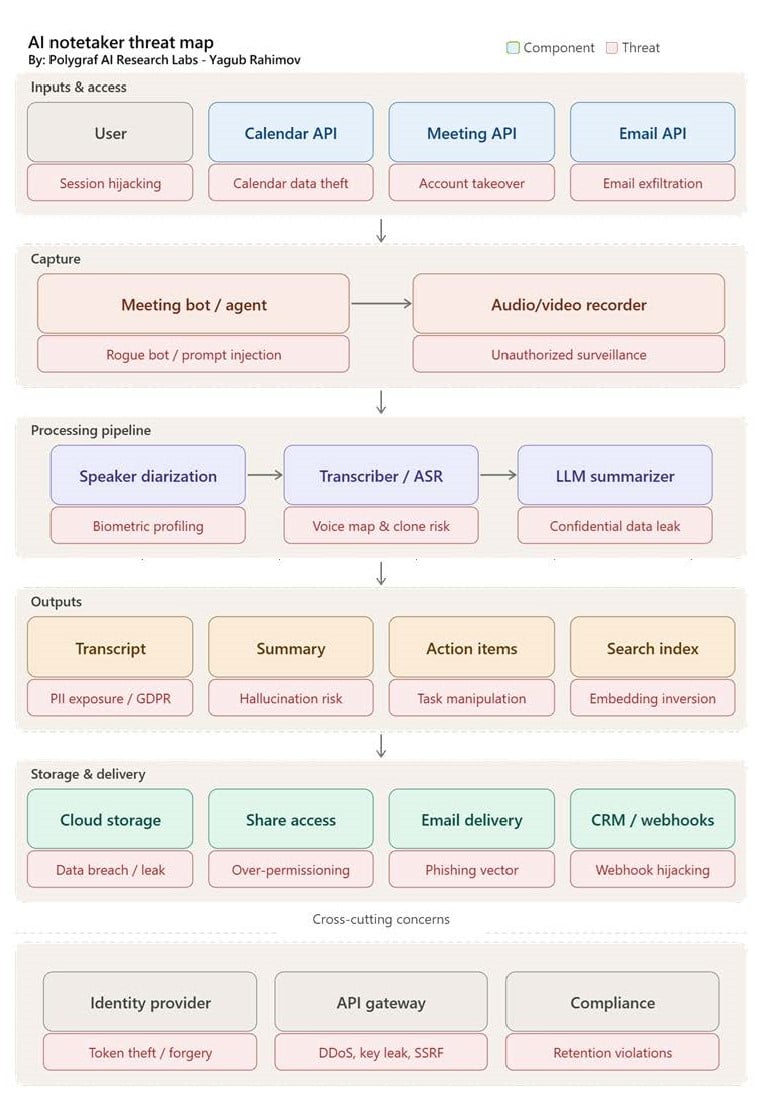

What AI notetakers actually do is far more consequential than what they are marketed as or what participants can see. They don't just take notes. They transcribe complete conversations in real time. They summarize, extract action items, and increasingly apply sentiment and intent analysis. They obtain OAuth grants to your email and calendar. They store transcripts, sync them to cloud environments, connect to email systems, and feed outputs into downstream workflows. Often, they rely on external language models whose data-handling practices are opaque, variable, and rarely audited.

What enterprises get, in reality, is a persistent third-party surveillance layer sitting inside their most sensitive business conversations: client meetings, strategy sessions, board meetings, product roadmap reviews, regulatory responses, and legal-hold discussions, all stored and controlled outside the enterprise perimeter.

An AI notetaker cannot tell the difference between a trade secret, a legally sensitive discussion, and a routine introductory call. It captures everything with equal fidelity.

This is the core of the risk: these tools were not designed with IP sensitivity or critical communications as a governing principle. Notetakers were designed for capture and convenience, not for compliance. Meanwhile, traditional enterprise security was built around an entirely different threat model. Legal and risk teams are left puzzled, because they cannot undo what has already been captured. Yesterday is history, but tomorrow cannot be a mystery.

THE ATTACK SURFACE NOBODY MAPPED

Beyond the passive risk of data flowing to unintended destinations, AI notetakers introduce a layered set of active attack vectors that most security teams have not yet fully inventoried.

The most underappreciated of these is OAuth. To function, these tools typically request broad permissions to your calendar, email, and meeting platform. Each of those connections is a potential pivot point for an attacker. A compromised OAuth token does not just expose the notetaking tool; it opens a pathway into your broader enterprise identity infrastructure. We have already seen what an OAuth failure did at Vercel. Token reuse, insufficient scope restrictions, and inadequate revocation policies mean that a single misconfigured integration can become a catastrophic attack vector.
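To make the scope concern concrete, here is a minimal sketch of the kind of review a security team might run over an exported list of third-party OAuth grants. It is illustrative only: the `OAuthGrant` structure, the `audit_grants` function, the app and user names, and the choice of which scopes count as "broad" are hypothetical assumptions, not any vendor's API. The scope strings are real Google API and Microsoft Graph scope names, but exporting grants from your identity provider is left out.

```python
from dataclasses import dataclass

# Hypothetical policy: which scopes count as "broad" is an organizational choice.
# The strings themselves are real Google API and Microsoft Graph scope names.
BROAD_SCOPES = {
    "https://mail.google.com/",                        # full Gmail access
    "https://www.googleapis.com/auth/gmail.readonly",  # read all mail
    "https://www.googleapis.com/auth/calendar",        # read/write all calendars
    "Mail.Read",                                       # Microsoft Graph: read mail
    "Mail.ReadWrite",
    "Calendars.ReadWrite",
    "offline_access",                                  # long-lived refresh tokens
}


@dataclass
class OAuthGrant:
    """One consent grant, as it might appear in a (hypothetical) export."""
    app_name: str
    user: str
    scopes: set[str]


def audit_grants(grants: list[OAuthGrant], approved_apps: set[str]) -> list[str]:
    """Return human-readable findings for unapproved apps and broad scopes."""
    findings = []
    for grant in grants:
        if grant.app_name not in approved_apps:
            findings.append(f"{grant.user}: unapproved app '{grant.app_name}'")
        broad = sorted(grant.scopes & BROAD_SCOPES)
        if broad:
            findings.append(
                f"{grant.user}: '{grant.app_name}' holds broad scopes {broad}"
            )
    return findings


if __name__ == "__main__":
    grants = [
        OAuthGrant(
            "ExampleNotetaker",
            "alice@example.com",
            {
                "https://www.googleapis.com/auth/calendar",
                "https://www.googleapis.com/auth/gmail.readonly",
            },
        ),
        OAuthGrant(
            "ApprovedAssistant",
            "bob@example.com",
            {"https://www.googleapis.com/auth/calendar.readonly"},
        ),
    ]
    for finding in audit_grants(grants, approved_apps={"ApprovedAssistant"}):
        print(finding)
```

In this example only the unapproved notetaker is flagged, once for not being on the approved list and once for holding full calendar and mail-read scopes; the approved assistant's read-only calendar scope passes. Treating `offline_access` as broad reflects the token-reuse and revocation concerns above, since refresh tokens outlive the session that created them.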

Then there is the question of who, exactly, joins these meetings. AI bots are notoriously easy to spoof or impersonate, and there are documented cases of unauthorized participants joining enterprise meetings via manipulated calendar invitations or misconfigured meeting links. Once inside, these actors can record, summarize, and exfiltrate conversations without triggering a conventional security alert, because network and endpoint tools register a connection, not its context. In the AI age, context is everything.

A related and growing risk involves participants consulting AI systems in real time during recruitment interviews, vendor evaluations, or sensitive negotiations. In those scenarios, AI tools may influence or distort outcomes without any disclosure to other participants, creating governance and fairness liabilities that extend well beyond data security, especially when the outcome affects health or financial decisions.

CYBER AND LEGAL THREAT MAP

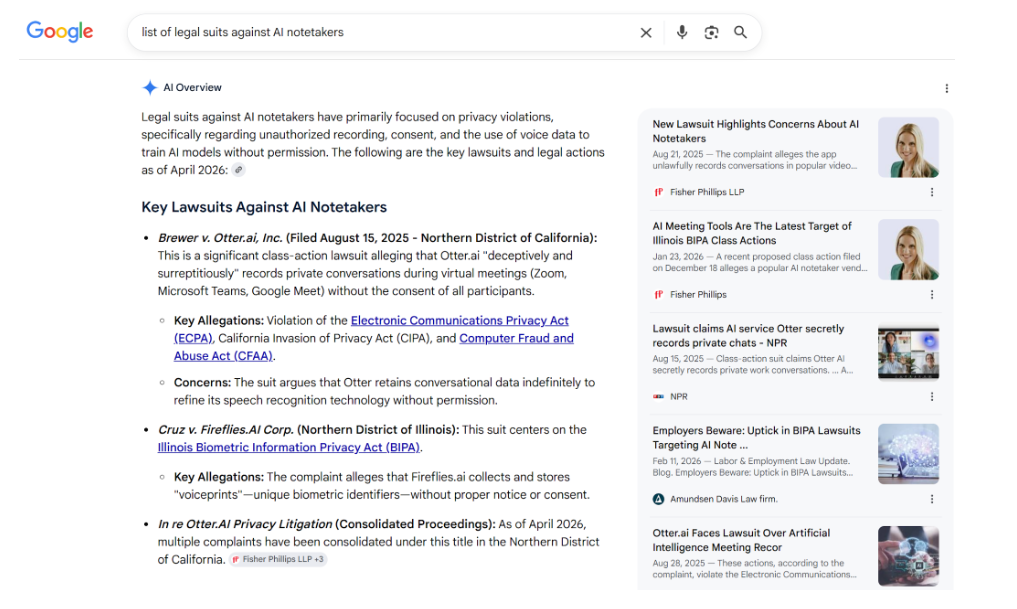

The legal threat here is not theoretical. Many jurisdictions require the consent of all parties (often called two-party consent) before a conversation is recorded. Take California as an example: its Invasion of Privacy Act requires every participant in a confidential communication to consent before it is recorded. Illinois, Connecticut, and a growing list of other states have analogous statutes. In cross-border conversations, the European Union's General Data Protection Regulation (GDPR) adds further obligations for enterprises, including a lawful basis for processing, data minimization principles, and restrictions on transfers to processors in third countries. Most notetakers fail to meet at least some of these legal requirements. A simple Google search for "[your notetaker's name] lawsuit" will show you how many legal cases have already been filed.

Even when enterprises have privacy compliance policies, the practical challenge with AI notetakers is that most deployments happen at the individual user level, not at the enterprise governance level. A team member signs up for a tool, connects it to their calendar, and begins using it across internal and external calls, often without informing participants, often without legal review, and almost never with a data processing agreement that would survive regulatory scrutiny.

The liability is not only regulatory. In litigation, transcripts generated by AI tools can become discoverable, and courts have ordered foundation model providers to preserve data even after users have "deleted" it.

The question of who owns those transcripts, and what rights the notetaker vendor retains to use them for model training, is rarely addressed clearly in standard terms of use. Several major AI productivity platforms openly reserve broad rights to use interaction data for "product improvement," which can mean training and retraining their models. Most enterprise legal teams have never reviewed such clauses in the context of meeting notetakers.

TRUST IS BROKEN

Every CISO has spent their career securing the network perimeter. And almost every one of them has overlooked securing the conversation itself.

There is a dimension to the notetaker risk that transcends data governance: the erosion of trust in digital collaboration itself. We already see this everywhere. AI-generated content is becoming increasingly sophisticated, and deepfake impersonation in virtual meetings is becoming part of everyday operational risk. A convincing voice or video replica of an executive joining a call alongside an AI transcription tool creates everything needed for sophisticated social engineering at a scale that was not possible three years ago, and the recordings these tools accumulate capture exactly the raw material such replicas are built from: voice biometrics, tone, personality, word choice, and more.

In a world where multimillion-dollar M&A transactions, litigation strategy, and regulatory negotiations happen over Zoom, Google Meet, or Teams, that fracture of trust, however unlikely, can carry massive consequences.

TRADITIONAL SECURITY ARCHITECTURES ARE BLIND TO NOTETAKERS

The security stack most enterprises rely on was built to protect infrastructure, the middle layer of the cybersecurity pyramid: firewalls, endpoint detection tools, data loss prevention systems, and SIEM platforms. Each of these is designed around network traffic, endpoints, and structured data flows. None is designed or equipped to evaluate the semantic content of a conversation, or to recognize that an AI tool has just captured a critical discussion involving undisclosed intellectual property and routed it to an external processor that will retain it, use it for retraining, and, if subpoenaed, share it with a court.

A NEW OPERATING MODEL FOR NOTETAKER AI GOVERNANCE

Banning notetakers outright is neither practical nor productive for enterprises. What is needed is a dedicated AI context security protocol that treats AI interactions as a governed, auditable layer of enterprise activity, applying to AI-mediated conversations the same scrutiny that email, file sharing, and data access already receive.

This means establishing clear policies for which AI tools can connect to which meeting environments, including:

- auditing OAuth permissions with the same rigor applied to privileged access management.

- requiring data processing agreements with every AI vendor that touches video calls.

- building oversight mechanisms capable of evaluating AI behavior in context, flagging anomalies in real time rather than discovering them during a breach investigation or a regulatory inquiry.

- understanding the contextual value of what is being discussed, so that a trade secret is not handled like a routine introductory call.

- handling all policies and data within the enterprise’s internal infrastructure with no external touchpoints.
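The controls above can be combined into a single default-deny gate that runs before a notetaker is admitted to a meeting. The sketch below is hypothetical throughout: `NotetakerPolicy`, `JoinRequest`, `evaluate`, and every tool, vendor, and region name are illustrative assumptions, and wiring such a check into a real meeting platform is out of scope. It conservatively requires consent from every participant, since all-party consent rules apply in many jurisdictions.

```python
from dataclasses import dataclass, field


@dataclass
class NotetakerPolicy:
    """Hypothetical enterprise policy for AI notetakers joining meetings."""
    allowed_tools: dict[str, set[str]]  # meeting environment -> permitted tools
    vendors_with_dpa: set[str]          # vendors with a signed DPA on file
    allowed_regions: set[str] = field(default_factory=lambda: {"on-prem"})


@dataclass
class JoinRequest:
    """A notetaker asking to join one meeting (all fields illustrative)."""
    tool: str
    vendor: str
    environment: str          # e.g. "teams" or "zoom"
    processing_region: str    # where transcripts would be processed and stored
    participants: list[str]
    consented: set[str]       # participants who agreed to be recorded


def evaluate(policy: NotetakerPolicy, req: JoinRequest) -> list[str]:
    """Return the reasons to block the join; an empty list means allow."""
    reasons = []
    # Default deny: unknown environments have no approved tools.
    if req.tool not in policy.allowed_tools.get(req.environment, set()):
        reasons.append(f"'{req.tool}' is not approved for {req.environment}")
    if req.vendor not in policy.vendors_with_dpa:
        reasons.append(f"no data processing agreement with {req.vendor}")
    if req.processing_region not in policy.allowed_regions:
        reasons.append(f"data would leave approved infrastructure ({req.processing_region})")
    # Conservative all-party consent: every participant must have agreed.
    missing = [p for p in req.participants if p not in req.consented]
    if missing:
        reasons.append("no recording consent from: " + ", ".join(missing))
    return reasons


if __name__ == "__main__":
    policy = NotetakerPolicy(
        allowed_tools={"teams": {"InternalScribe"}},
        vendors_with_dpa={"InternalIT"},
    )
    ok = JoinRequest("InternalScribe", "InternalIT", "teams", "on-prem",
                     ["alice", "bob"], {"alice", "bob"})
    print(evaluate(policy, ok))  # prints []
    bad = JoinRequest("ExampleNotetaker", "ExampleVendor", "zoom", "us-east",
                      ["alice", "carol"], {"alice"})
    for reason in evaluate(policy, bad):
        print(reason)
```

Returning every failing reason, rather than stopping at the first, gives auditors a complete record of why a join was blocked, which supports the goal of catching problems in real time instead of during a breach investigation.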

The shift required is from detection to control, and from reactive governance to real-time, inline, behavioral oversight of AI interactions with complete data sovereignty, which also means on-premises operation. This is not a future-state ambition; it is a present-day operational requirement.

THE IP vs. AI REALITY

Intellectual property laws were built on a simple principle: your proprietary ideas have value, and that value deserves protection. Today, any tool that captures, stores, and processes the conversations in which those ideas are formed deserves the same level of governance as any other enterprise asset. But that is easier said than done.

AI productivity tools aren't going away soon. But the assumption that trust can be handed over on a silver platter is long gone. Security and legal leaders can no longer afford to ignore the elephant in the room. Conversations and sales calls happen every minute, and the IP that defines your competitive position tomorrow, or the privileged information that makes tomorrow possible, could be discussed in any of them.

Adversary infiltration isn't the only threat CISOs must look out for; the assistant you voluntarily invited to the table could be your biggest risk today.

The question isn't whether organizations should use AI notetakers. The questions I think you should ask are who else in the room is using one, where that information goes next, and what your enterprise's privileged data is worth.

____________________________________________________________________

REFERENCES & LEGAL CONTEXT

California Invasion of Privacy Act (CIPA), Cal. Penal Code sec. 630 et seq. Requires all-party consent for recording confidential communications.

EU General Data Protection Regulation (GDPR), Regulation (EU) 2016/679. Articles 5, 6, 28, and 44 govern data minimization, lawful basis, processor agreements, and cross-border transfers.

NIST AI Risk Management Framework (AI RMF 1.0), January 2023. Provides governance guidance for managing risks associated with AI systems in organizational contexts.

Electronic Communications Privacy Act (ECPA), 18 U.S.C. sec. 2510-2523. Federal baseline for interception of wire and electronic communications.

Illinois Eavesdropping Act, 720 ILCS 5/14-2. Prohibits recording private conversations without the consent of all parties; frequently cited in AI transcription compliance contexts.

____________________________________________________________________

About the Author

Yagub Rahimov is the founder of Polygraf AI and a globally recognized expert in AI security, data governance, and enterprise risk. He advises organizations in highly regulated and critical-operations sectors as they navigate the intersection of artificial intelligence, sensitive data protection, and regulatory compliance.
